|

Diffusion-based methods have demonstrated remarkable capabilities in generating a diverse array of high-quality images,

sparking interests for styled avatars, virtual try-on, and more. Previous methods use the same reference image as the

target. An overlooked aspect is the leakage of the target's spatial information, style, etc. from the reference,

harming the generated diversity and causing shortcuts. However, this approach continues as widely available datasets

usually consist of single images not grouped by identities, and it is expensive to recollect large-scale same-identity

data. Moreover, existing metrics adopt decoupled evaluation on text alignment and identity preservation, which fail at

distinguishing between balanced outputs and those that over-fit to one aspect.

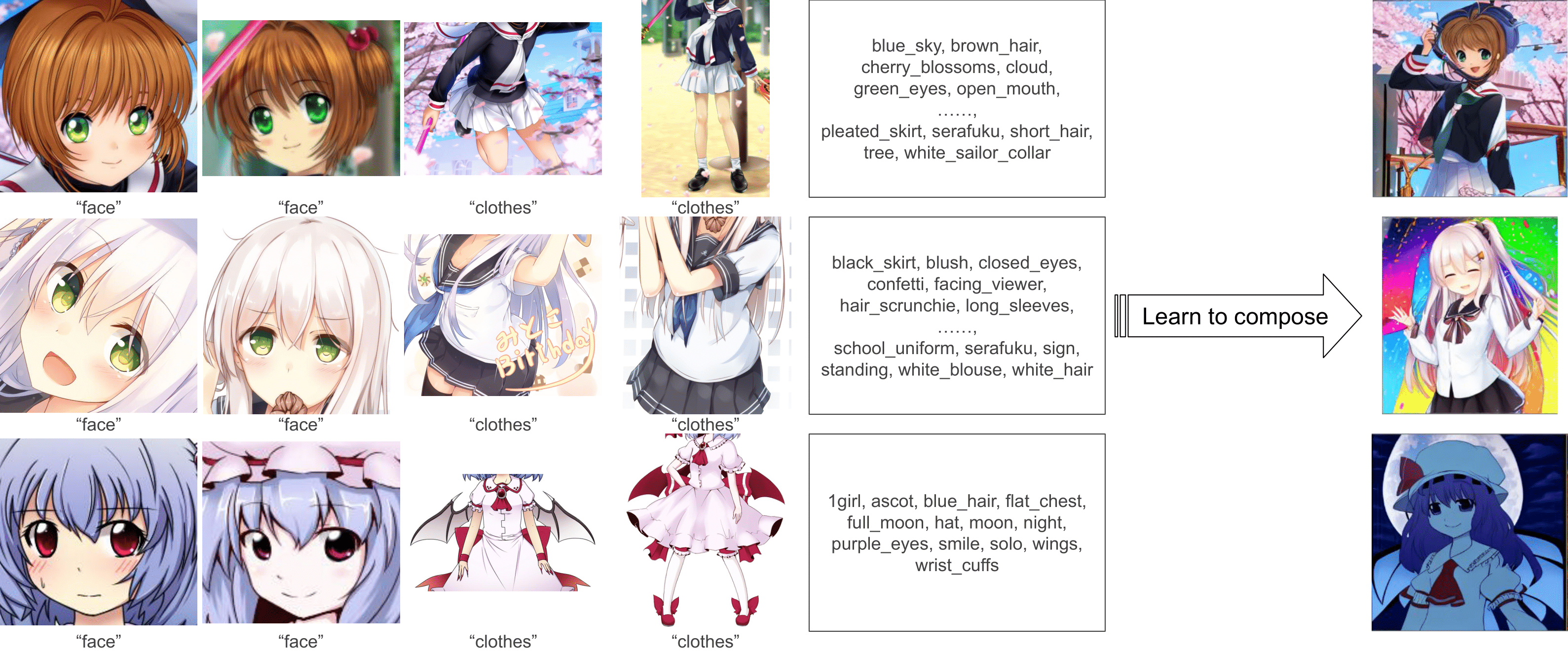

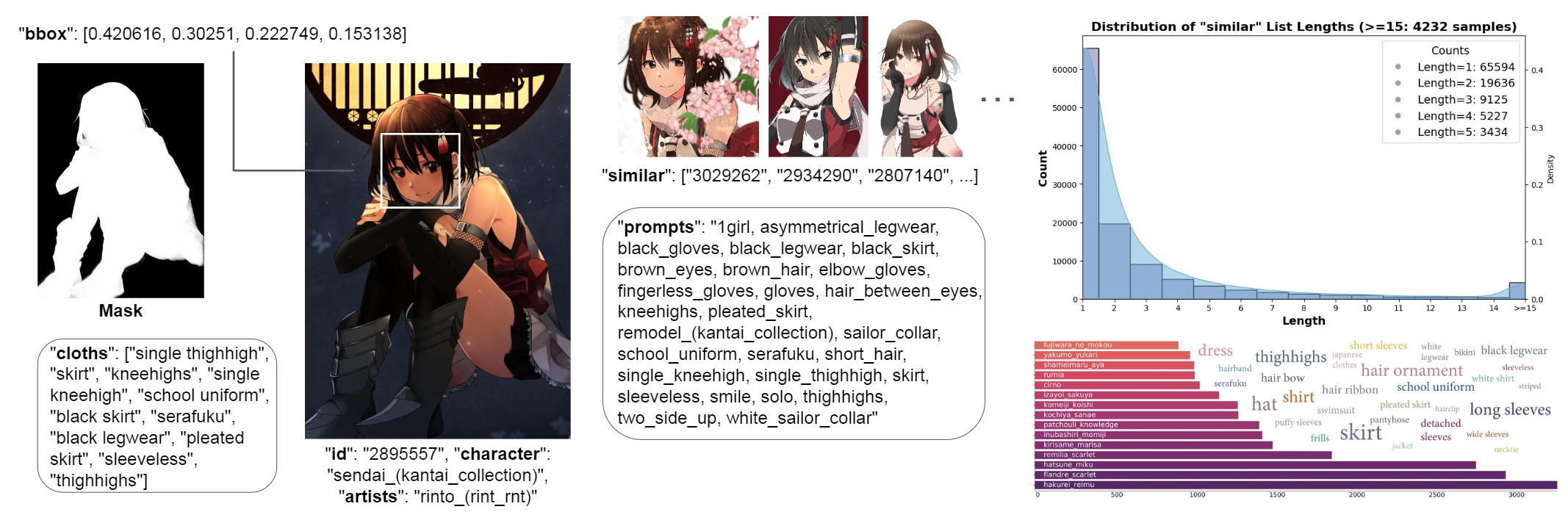

In this paper, we propose a multi-level, same-identity dataset RetriBooru, which groups anime characters by both face

and cloth identities. RetriBooru enables adopting reference images of the same character and outfits as the target,

while keeping flexible gestures and actions. We benchmark previous methods on our dataset, and demonstrate the

effectiveness of training with a reference image different from target (but same identity). We introduce a new concept

composition task, where the conditioning encoder learns to retrieve different concepts from several reference images,

and modify a baseline network RetriNet for the new task. Finally, we introduce a novel class of metrics named Similarity

Weighted Diversity (SWD), to measure the overlooked diversity and better evaluate the alignment between similarity and

diversity.

|